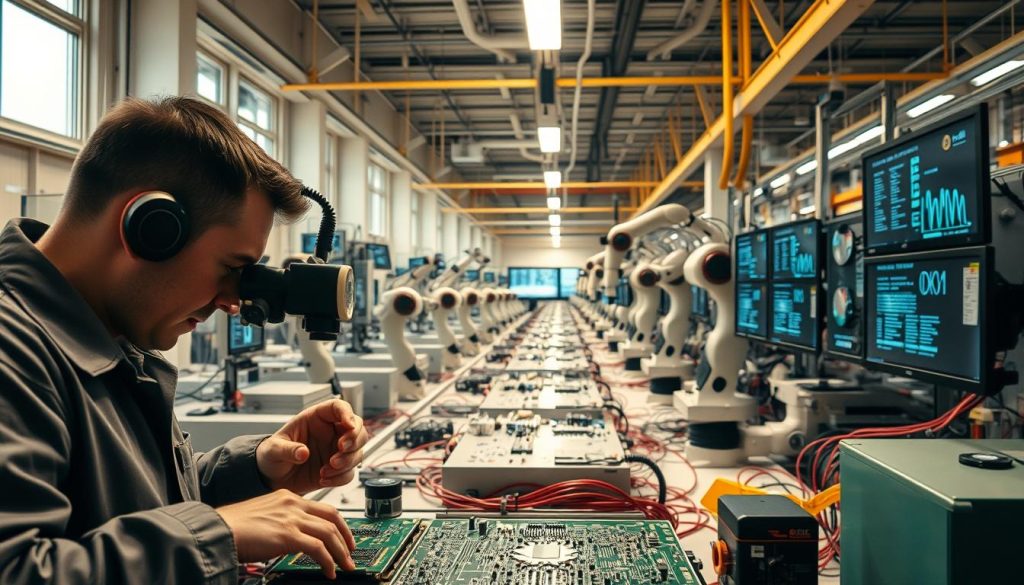

Deep learning has fundamentally changed how manufacturers identify product flaws, enabling vision systems that catch surface defects, dimensional errors, and assembly problems with accuracy rates consistently above 99%. While human inspectors lose focus after 20 to 30 minutes of repetitive visual checks, AI-powered cameras process thousands of items per minute without any decline in precision.

Manufacturing defects cost U.S. industries an estimated $2 trillion annually in recalls, wasted materials, and lost customer trust, according to the American Society for Quality. Traditional manual inspection catches roughly 80% of visible flaws, leaving a significant gap that neural network vision systems now close. At Opsio, we help manufacturers deploy deep learning inspection solutions that integrate with existing production lines to reduce waste, minimize downtime, and protect brand reputation.

Key Takeaways

- AI-powered vision systems detect surface defects, dimensional deviations, and assembly errors with over 99% accuracy in production environments.

- Convolutional neural networks (CNNs) and Vision Transformers extract visual features at multiple scales, from hairline cracks to large structural anomalies.

- Automated inspection processes thousands of items per minute without the accuracy decline caused by human fatigue.

- Transfer learning and few-shot methods reduce data requirements, making deployment practical even when defect samples are scarce.

- Integration with predictive quality control pipelines enables real-time corrective action directly on the production line.

How Neural Networks Detect Manufacturing Defects

Neural networks learn to spot defects by training on labeled images of acceptable and flawed products, building an internal representation of what "normal" looks like and flagging anything that deviates. The process pairs high-resolution imaging hardware with software models that automatically learn hierarchical visual features.

Unlike rule-based machine vision, which requires engineers to hand-code inspection criteria for every defect type, deep learning models generalize from examples. A convolutional neural network (CNN) identifies edges and textures in its early layers, combines them into shapes in intermediate layers, and recognizes complete defect patterns in its final layers. This hierarchical feature extraction is what allows the same underlying technology to work across diverse product types and anomaly categories.
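
To make the "edges in early layers" idea concrete, here is a minimal pure-Python sketch of the sliding-window filtering that a convolutional layer performs. The Sobel kernel is hand-written for illustration; a trained CNN learns its filters from data, and the names `convolve2d`, `SOBEL_X`, and `patch` are assumptions made for this example.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation, the core operation of a conv layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = 0.0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Hand-written vertical-edge filter (Sobel). Illustrative only:
# in a real CNN these weights are learned during training.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]

# Toy 5x5 grayscale patch: dark on the left, bright on the right.
patch = [[0, 0, 0, 1, 1] for _ in range(5)]

response = convolve2d(patch, SOBEL_X)
# The response is zero over the flat region and non-zero where the edge sits.
```

Stacking many such filters, with nonlinearities and downsampling between them, is what lets later layers respond to shapes and whole defect patterns rather than single edges.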

More recent architectures such as Vision Transformers (ViTs) take this further by capturing long-range spatial dependencies across an image. Where a CNN processes local patches, a transformer's self-attention mechanism relates distant regions. That capability is critical for detecting defects that span large areas or appear in unexpected locations, such as warping across a metal panel or inconsistent coating thickness.

In 2025 and 2026, foundation models pretrained on billions of images (such as DINOv2 and Segment Anything Model 2) have entered the manufacturing space, allowing companies to fine-tune highly capable feature extractors on relatively small sets of factory-specific defect images. This dramatically shortens the path from pilot to production-grade inspection.

Classification, Detection, and Segmentation

Three core computer vision techniques serve different inspection needs: classification for pass/fail decisions, detection for locating anomalies, and segmentation for mapping exact defect boundaries at the pixel level.

Choosing the right approach depends on what the production line requires:

- Classification assigns a single label (pass or fail) to an entire image. It is the fastest method and works best for high-volume, single-product lines where location details are secondary.

- Object detection identifies and localizes multiple anomalies with bounding boxes. It provides spatial context that helps operators understand defect distribution and prioritize corrective actions.

- Semantic segmentation delivers pixel-level precision, creating detailed maps of exact defect boundaries and dimensions. This is essential in aerospace and electronics manufacturing, where defect geometry directly impacts safety and compliance.
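
As a rough illustration of why pixel-level masks matter, the sketch below turns a binary segmentation mask into physical defect measurements. The function name `measure_defect` and the `mm_per_pixel` calibration value are assumptions for this example; a real system would obtain the calibration from its camera setup.

```python
def measure_defect(mask, mm_per_pixel):
    """Derive area and bounding box from a binary mask (1 = defect pixel)."""
    pixels = [(r, c)
              for r, row in enumerate(mask)
              for c, value in enumerate(row) if value]
    if not pixels:
        return None  # no defect pixels in this mask
    rows = [r for r, _ in pixels]
    cols = [c for _, c in pixels]
    top, left, bottom, right = min(rows), min(cols), max(rows), max(cols)
    return {
        "bbox": (top, left, bottom, right),
        "area_mm2": len(pixels) * mm_per_pixel ** 2,
        "height_mm": (bottom - top + 1) * mm_per_pixel,
        "width_mm": (right - left + 1) * mm_per_pixel,
    }


# Toy mask with an L-shaped defect covering three pixels.
mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 0, 0],
]
measurement = measure_defect(mask, mm_per_pixel=0.5)
```

A bounding box alone would report a 1.0 mm by 1.0 mm region here, while the mask shows that only three quarters of that area is actually defective.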

| Technique | Best Use Case | Processing Speed | Precision Level |
|---|---|---|---|
| Classification | High-volume pass/fail screening | Fastest (sub-millisecond) | Moderate |
| Object Detection | Multi-anomaly identification | Moderate (5-20 ms) | High |
| Semantic Segmentation | Critical boundary analysis | Slower (20-100 ms) | Highest (pixel-level) |

Many production systems combine these methods in multi-stage pipelines. A fast classifier screens every item, and flagged products pass through a segmentation model for detailed analysis. This layered approach balances throughput with precision and keeps computational costs manageable.
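
The two-stage pipeline described above can be sketched as follows. This is a minimal outline, not a production design: `classify` and `segment` stand for whatever models a line actually uses, the toy stand-ins below are purely illustrative, and the 0.5 threshold is an assumed value that would need tuning.

```python
def inspect_batch(images, classify, segment, threshold=0.5):
    """Screen every image with a fast classifier; segment only flagged ones."""
    results = []
    for image in images:
        defect_score = classify(image)
        if defect_score < threshold:
            # Cheap path: most items exit here without running segmentation.
            results.append({"verdict": "pass", "score": defect_score, "mask": None})
        else:
            # Expensive path: only flagged items get a pixel-level mask.
            results.append({"verdict": "flagged", "score": defect_score,
                            "mask": segment(image)})
    return results


# Stand-in models for illustration: each "image" is a flat list of intensities.
def toy_classifier(image):
    return max(image)


def toy_segmenter(image):
    return [pixel > 0.5 for pixel in image]


results = inspect_batch(
    [[0.1, 0.2, 0.1], [0.1, 0.9, 0.2]],
    toy_classifier,
    toy_segmenter,
)
```

Because the segmenter runs only on flagged items, its higher per-image cost is paid on a small fraction of production volume.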

Preparing Training Data for Reliable Models

Model performance depends directly on training data quality, and poorly labeled or imbalanced datasets produce unreliable inspectors regardless of how sophisticated the architecture is.

Image Acquisition Standards

Consistent lighting, camera resolution, and product positioning are non-negotiable. Shadows, glare, and angle variations introduce noise that degrades model accuracy. Industrial setups typically use ring lights or diffuse lighting enclosures paired with fixed-mount cameras at controlled distances. Resolution requirements vary by application: PCB inspection often demands 10+ megapixel cameras, while large-part automotive inspection may work with 5 megapixels.
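
A quick way to reason about resolution requirements is to work out how many pixels must span the field of view for the smallest defect of interest to remain visible. The sketch below assumes a rule of thumb of three pixels across the smallest defect; that figure, the function names, and the example dimensions are assumptions for illustration, not values from any standard.

```python
import math


def mm_per_pixel(field_of_view_mm, sensor_pixels):
    """Physical size covered by one pixel along one axis."""
    return field_of_view_mm / sensor_pixels


def required_pixels(field_of_view_mm, min_defect_mm, pixels_per_defect=3):
    """Pixels needed along one axis so the smallest defect spans enough pixels."""
    return math.ceil(field_of_view_mm / min_defect_mm * pixels_per_defect)


# Hypothetical case: 100 mm wide field of view, 0.25 mm smallest defect.
pixels_needed = required_pixels(field_of_view_mm=100, min_defect_mm=0.25)
resolution = mm_per_pixel(field_of_view_mm=100, sensor_pixels=4000)
```

Under these assumptions the camera needs at least 1,200 pixels across the 100 mm width, and a 4,000-pixel-wide sensor would resolve 0.025 mm per pixel.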

Labeling and Annotation

Domain experts annotate images with precise classifications, bounding boxes for detection tasks, or pixel masks for segmentation. Using multiple annotators with cross-validation reduces subjective inconsistency. Validation and test sets must remain completely separate from training data to ensure honest performance metrics.
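
One common way to quantify how consistently two annotators label the same images is Cohen's kappa, which corrects raw agreement for agreement expected by chance. The article does not name a specific metric, so treat this as one reasonable option; the function name and sample labels are illustrative.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    # Probability that both annotators pick the same label by chance.
    expected = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["ok", "ok", "defect", "defect"]
annotator_2 = ["ok", "ok", "defect", "ok"]
kappa = cohens_kappa(annotator_1, annotator_2)
```

A low kappa on a sample of doubly labeled images suggests the labeling guidelines need tightening before more data is annotated.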

Handling Class Imbalance

In high-quality manufacturing, defective samples may represent less than 1% of production output. This creates severely imbalanced datasets that bias models toward predicting "pass" on everything. Proven strategies include:

- Data augmentation (rotation, flipping, color jittering, elastic deformation) to expand defect samples realistically

- Synthetic defect generation using diffusion models or generative adversarial networks (GANs) to create photorealistic defect images

- Oversampling techniques adapted for image data to rebalance the training distribution

- Loss function weighting to penalize missed defects more heavily than false alarms
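
The loss-weighting strategy from the list above can be sketched in a few lines. This example uses inverse-frequency class weights with a weighted binary cross-entropy; both are common choices rather than the only ones, and the function names are assumptions for illustration.

```python
import math


def inverse_frequency_weights(labels):
    """Weight each class by n / (num_classes * class_count)."""
    counts = {}
    for label in labels:
        counts[label] = counts.get(label, 0) + 1
    n = len(labels)
    return {label: n / (len(counts) * count) for label, count in counts.items()}


def weighted_bce(y_true, p_defect, weights, eps=1e-7):
    """Binary cross-entropy where each sample is scaled by its class weight."""
    total = 0.0
    for y, p in zip(y_true, p_defect):
        p = min(max(p, eps), 1 - eps)  # clamp to avoid log(0)
        loss = -math.log(p) if y == 1 else -math.log(1 - p)
        total += weights[y] * loss
    return total / len(y_true)


# 1% defect rate: 99 good items (label 0) and 1 defective item (label 1).
weights = inverse_frequency_weights([0] * 99 + [1])

missed_defect = weighted_bce([1], [0.1], weights)  # defect scored as likely good
false_alarm = weighted_bce([0], [0.9], weights)    # good item scored as likely defect
```

With these weights a confidently missed defect costs roughly a hundred times more than an equally confident false alarm, which counteracts the pull toward predicting "pass" on everything.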

Manual Inspection vs. AI-Powered Quality Control

AI-based inspection eliminates the consistency and fatigue problems inherent in manual quality checks while delivering higher accuracy: above 99% in controlled production environments, compared with roughly 80% for manual inspection.

Where Manual Inspection Falls Short

Human inspectors experience a decline in accuracy after 20 to 30 minutes of repetitive visual tasks, according to research published in the journal Ergonomics. Subjective judgment varies between shifts and individuals. Physical limitations prevent detecting microscopic anomalies, and manual methods create throughput bottlenecks on high-speed lines.

What AI Vision Systems Deliver

Automated vision inspection applies identical evaluation criteria to every item, operates continuously without performance degradation, and scales to match production speeds. Research published in the Journal of Manufacturing Systems shows that deep learning inspection achieves accuracy rates above 99% in controlled production environments.

| Aspect | Manual Inspection | AI Vision System |
|---|---|---|
| Consistency | Variable, declines with fatigue | Uniform across all shifts |
| Throughput | Limited by human processing speed | Thousands of items per minute |
| Accuracy | Approximately 80%, drops over time | Above 99%, sustained indefinitely |
| Data Output | Minimal documentation | Full analytics and traceability |
| Microscopic Detection | Severely limited | Sub-millimeter precision |
| Cost at Scale | Linear with volume | Near-fixed after deployment |

A hybrid approach often works best in practice: AI handles routine high-speed screening while human experts review edge cases and manage the overall process. This combination leverages the strengths of both and creates a more resilient quality control framework.

Building and Deploying a Detection Model

Successful deployment follows a structured process from requirement gathering through pilot validation to full production rollout, with architecture selection driven by the specific inspection challenge and production constraints.

Pre-Trained Models vs. Custom Architectures

Pre-trained models such as ResNet, EfficientNet, or YOLO variants offer rapid deployment through transfer learning. They work well for common defect types where the visual patterns resemble those in large public datasets. Custom architectures become necessary for highly specialized inspections involving unusual materials, unique product geometries, or extreme precision requirements.

Key selection criteria:

- Available training data volume and defect diversity

- Required accuracy and acceptable false positive rate

- Real-time inference latency constraints

- Long-term maintenance and retraining capacity

- Regulatory documentation and audit trail requirements

Implementation Stages

- Requirements analysis: Define specific anomaly types, accuracy targets, throughput needs, and production environment constraints.

- Data collection and preparation: Establish image acquisition protocols and build annotated datasets with clear quality standards.

- Model selection and training: Choose architecture, train on prepared data, validate against holdout sets, and tune hyperparameters.

- Pilot deployment: Run the model alongside existing inspection for measurable A/B comparison over a defined period.

- Production integration: Connect the model to the line's defect detection infrastructure, then configure alerting, rejection mechanisms, and feedback loops.

- Continuous improvement: Monitor key metrics (accuracy, false positive rate, throughput), retrain on new defect patterns, and expand coverage to additional product lines.
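
The monitoring metrics named in the last stage can be computed from four confusion counts. The sketch below is illustrative (the function name and the sample counts are assumptions) and shows why accuracy should not be tracked alone when defects are rare.

```python
def inspection_metrics(tp, fp, tn, fn):
    """Compute core inspection metrics, treating 'defect' as the positive class."""
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        # Share of real defects that were caught.
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        # Share of good items that were wrongly rejected.
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
    }


# Hypothetical shift: 10,000 items, 100 of them defective.
# A model that passes everything still looks accurate on paper.
always_pass = inspection_metrics(tp=0, fp=0, tn=9900, fn=100)

# A model that catches 95 defects at the cost of 50 false rejects.
useful_model = inspection_metrics(tp=95, fp=50, tn=9850, fn=5)
```

Both models report about 99% accuracy in this example, but only the second one catches defects, which is why recall and false positive rate belong on the dashboard alongside accuracy.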

Integrating Vision Systems into Production Lines

Retrofitting AI vision into an existing factory requires strategic camera placement, specialized lighting, edge computing hardware, and software connectivity that complements rather than disrupts operational rhythms.

Hardware considerations include camera resolution (typically 5 to 20 megapixels for industrial inspection), lens selection matched to field-of-view requirements, and compute resources capable of real-time inference. Edge AI processors such as NVIDIA Jetson or Intel Movidius handle inference locally, eliminating the latency associated with cloud-based processing and keeping sensitive production data on-premises.
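
Whether a given edge device is fast enough comes down to simple arithmetic: the line speed fixes a time budget per item, and every stage has to fit inside it. The sketch below assumes items are processed strictly one at a time on a single stream; the stage names and latency figures are hypothetical.

```python
def per_item_budget_ms(items_per_minute):
    """Time available per item, in milliseconds, at a given line speed."""
    if items_per_minute <= 0:
        raise ValueError("items_per_minute must be positive")
    return 60_000 / items_per_minute


def fits_budget(items_per_minute, stage_latencies_ms):
    """True if the summed stage latencies fit within the per-item budget."""
    return sum(stage_latencies_ms.values()) <= per_item_budget_ms(items_per_minute)


# Hypothetical latencies for one inspection station.
stages = {"capture": 5.0, "inference": 20.0, "reject_actuation": 10.0}

budget_at_1200 = per_item_budget_ms(1200)  # 50 ms per item
ok_at_1200 = fits_budget(1200, stages)     # 35 ms of work fits
ok_at_3000 = fits_budget(3000, stages)     # 20 ms budget is too tight
```

When the budget is exceeded, the usual options are a lighter model, pipelining the stages so they overlap, or splitting the stream across several devices.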

Software integration connects the vision system to manufacturing execution systems (MES), quality management databases, and operator dashboards. Real-time alerts trigger automated rejection mechanisms or line stops when critical defects appear. Standard industrial protocols such as OPC UA and MQTT ensure interoperability with existing factory automation infrastructure.
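
As an illustration of what a real-time alert might look like on the wire, the sketch below builds a JSON message suitable for publishing over MQTT. The topic layout, field names, and allowed actions are assumptions invented for this example; the actual publish call is left to whichever MQTT or OPC UA client library the plant uses.

```python
import json
from datetime import datetime, timezone

# Hypothetical set of responses the line controller understands.
ALLOWED_ACTIONS = {"log", "reject", "line_stop"}


def build_defect_alert(line_id, item_id, defect_type, confidence, action,
                       timestamp=None):
    """Return (topic, payload) for a defect alert; does not send anything."""
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action}")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    timestamp = timestamp or datetime.now(timezone.utc)
    topic = f"factory/{line_id}/inspection/defects"  # assumed topic scheme
    payload = json.dumps({
        "item_id": item_id,
        "defect_type": defect_type,
        "confidence": confidence,
        "action": action,
        "timestamp": timestamp.isoformat(),
    })
    return topic, payload


topic, payload = build_defect_alert(
    line_id="line-3", item_id="item-0042",
    defect_type="scratch", confidence=0.97, action="reject",
)
```

Keeping message construction separate from transport makes the alert format easy to test and lets the same payload feed an MES, a quality database, or an operator dashboard.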

Industry Applications and Use Cases

AI-powered visual inspection adapts to virtually any manufacturing sector, with each industry benefiting from models trained on its specific defect categories and compliance requirements.

Automotive Manufacturing

Vision systems identify paint imperfections, panel misalignment, weld quality issues, and component installation errors. High-speed cameras inspect vehicles at full production line pace without creating bottlenecks. Multi-camera setups capture 360-degree coverage of complex assemblies.

Aerospace and Defense

Critical examination of composite structures, turbine blades, and precision components demands the highest accuracy. Defects in aerospace parts can compromise flight safety, making pixel-level segmentation essential. Systems must meet stringent traceability and compliance requirements under standards such as AS9100.

Electronics and Semiconductors

Automated optical inspection (AOI) of printed circuit boards verifies component placement, solder joint integrity, and trace continuity. Microscopic analysis detects issues invisible to the human eye at production speeds of thousands of boards per hour. Deep learning PCB inspection continues to outperform traditional AOI in catching novel defect types.

Textiles and Fabrics

Fabric defect detection identifies weaving faults, stains, holes, and color inconsistencies across high-speed textile production lines. Pattern recognition models handle the complexity of woven and knitted structures that challenge conventional rule-based systems.

Pharmaceuticals and Medical Devices

Vision systems perform contamination detection, packaging integrity verification, and dimensional validation under strict regulatory oversight from bodies such as the FDA. These applications demand thorough audit trails, validation documentation, and deterministic test protocols.

Common Challenges and Practical Solutions

Real-world deployment faces data scarcity, environmental variability, and integration complexity, but each challenge has proven engineering solutions.

- Limited defect samples: Few-shot learning, self-supervised pretraining, and synthetic data generation through diffusion models reduce the need for large collections of real defect images. Foundation models pretrained on diverse visual data require as few as 50 to 100 labeled defect images for effective fine-tuning.

- Environmental variability: Lighting fluctuations, temperature changes, and vibrations affect image quality. Controlled acquisition environments combined with aggressive data augmentation during training build model resilience to real-world conditions.

- Computational constraints: Model optimization through quantization, pruning, and knowledge distillation reduces memory footprint and inference latency for edge deployment. Modern techniques achieve 4x to 8x compression with less than 1% accuracy loss.

- Legacy system integration: Middleware solutions bridge modern vision APIs with older SCADA and PLC systems through standard industrial protocols (OPC UA, MQTT, Modbus TCP).

- Model drift: Production conditions change over time as materials, suppliers, and processes evolve. Continuous monitoring with automated retraining pipelines ensures inspection accuracy does not degrade as conditions shift.
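
To show where compression figures like those under computational constraints come from, here is a minimal sketch of symmetric per-tensor int8 quantization. Storing each weight in one byte instead of four (float32) accounts for a 4x size reduction on its own. This is the simplest variant, written for illustration; production toolchains add calibration, per-channel scales, and other refinements.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.03, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Worst-case rounding error is about half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))

# Bytes per weight: 4 for float32 versus 1 for int8.
compression_ratio = 4 / 1
```

The rounding error per weight is bounded by half the scale, which is why accuracy loss is often small when weight magnitudes are reasonably uniform.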

Emerging Advances in AI-Powered Inspection

The field is advancing rapidly, with foundation models, multi-modal sensing, and federated training reshaping what automated quality control can achieve in 2026 and beyond.

Key developments reshaping the industry:

- Foundation models and zero-shot detection: Large pretrained vision models can identify defect types they were never explicitly trained on, using natural language descriptions of the anomaly. This dramatically reduces time-to-deployment for new product lines.

- Self-supervised anomaly detection: Models that learn "normal" appearance from unlabeled production images and flag deviations without requiring any labeled defect data. This approach is gaining traction in industries where defect samples are extremely rare.

- Multi-modal inspection: Combining visual data with thermal imaging, ultrasonic sensing, and X-ray analysis for comprehensive product integrity assessment that catches internal flaws invisible to cameras alone.

- Federated learning: Collaborative model training across multiple factory sites without sharing proprietary production data, enabling manufacturers to benefit from collective experience while protecting intellectual property.

- Edge AI standardization: Dedicated inference chips from NVIDIA, Intel, and Qualcomm deliver real-time performance at low power consumption, making edge deployment the default rather than the exception.
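
The self-supervised anomaly detection idea from the list above, learning "normal" and flagging deviations, can be illustrated with a deliberately simplified stand-in. The sketch assumes each item has already been reduced to a small feature vector (in practice by a pretrained network) and scores new items by their largest z-score against statistics from known-good items. The function names, features, and threshold are assumptions for this example.

```python
import statistics


def fit_normal_profile(good_features):
    """Per-feature mean and standard deviation from known-good items only."""
    columns = list(zip(*good_features))
    means = [statistics.fmean(column) for column in columns]
    # Guard against zero spread so scoring never divides by zero.
    stdevs = [statistics.pstdev(column) or 1e-9 for column in columns]
    return means, stdevs


def anomaly_score(features, profile):
    """Largest absolute z-score across features; higher means less normal."""
    means, stdevs = profile
    return max(abs(value - mean) / stdev
               for value, mean, stdev in zip(features, means, stdevs))


# Hypothetical two-dimensional features from four good items.
good_items = [[1.0, 10.0], [1.2, 10.5], [0.8, 9.5], [1.0, 10.0]]
profile = fit_normal_profile(good_items)

typical_score = anomaly_score([1.0, 10.0], profile)
outlier_score = anomaly_score([3.0, 10.0], profile)

THRESHOLD = 3.0  # assumed cutoff; would be tuned on validation data
is_anomaly = outlier_score > THRESHOLD
```

No defect labels are used anywhere in this sketch, which is the property that makes the approach attractive when defect samples are extremely rare.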

| Innovation | Maturity (2026) | Expected Mainstream Adoption |
|---|---|---|
| Foundation models for inspection | Early production deployments | 2027-2028 |
| Edge AI processing | Broadly adopted | Already standard in new installations |
| Self-supervised anomaly detection | Pilot to early production | 2027-2028 |
| Multi-modal inspection | Research and pilots | 2028-2030 |
| Federated learning | Cross-company pilot projects | 2028-2029 |

Getting Started with Automated Defect Detection

Implementing AI-powered inspection begins with understanding your specific quality challenges, the data you already have, and your production environment constraints. Opsio provides end-to-end support from initial feasibility assessment through full production deployment.

Our typical engagement follows this path:

- Assess current inspection workflows and identify the highest-impact opportunities for automation

- Conduct feasibility studies using your actual product images and defect samples

- Develop proof-of-concept models validated against your existing quality standards

- Integrate with existing production infrastructure and defect detection systems

- Provide ongoing optimization, model retraining, and coverage expansion as production evolves

Contact our team to discuss how automated visual inspection can reduce defect rates and operational costs across your manufacturing operations.

Frequently Asked Questions

What accuracy can deep learning achieve for defect detection?

Production-deployed deep learning systems routinely achieve accuracy rates above 99% for trained defect categories. Actual performance depends on image quality, defect visibility, and the volume and diversity of training data. High-contrast surface defects typically reach the highest accuracy, while subtle internal flaws may require supplementary sensing methods such as thermal or X-ray imaging.

How much training data is needed to build a reliable detection model?

With transfer learning from a pretrained model, a minimum of 200 to 500 labeled images per defect category is a practical starting point. Foundation models available in 2026 can achieve usable accuracy with as few as 50 labeled examples per class. Synthetic data generation and augmentation techniques can expand small datasets further. The more diverse the training conditions (lighting, angle, product variant), the more robust the deployed model.

Can AI inspection integrate with existing production lines?

Yes. Modern vision systems are designed for retrofit integration using standard industrial cameras, edge computing hardware, and protocol connectors (OPC UA, MQTT, REST APIs). Integration typically begins with a pilot on a single line before scaling across the facility. Most installations are completed within 4 to 12 weeks depending on complexity.

Which industries benefit most from automated visual inspection?

Automotive, aerospace, electronics, pharmaceuticals, metals, textiles, and food packaging see the largest impact. Any manufacturing process where surface quality, dimensional accuracy, or assembly correctness is critical can benefit, particularly high-volume production with strict quality standards and high cost-of-failure.

What is the difference between classification and segmentation in defect detection?

Classification assigns a pass/fail label to an entire image and is fastest for high-throughput screening. Segmentation maps exact defect boundaries at the pixel level, providing precise size, shape, and location measurements. Many production systems use classification first for speed, then apply segmentation to flagged items for detailed analysis and root cause documentation.